‘an action can be explained by a goal state if, and only if, it is seen as the most justifiable action towards that goal state that is available within the constraints of reality’

\citep[p.~255]{Csibra:1998cx}

Csibra & Gergely (1998, 255)

1. action a is directed to some goal;

2. actions of a’s type are normally means of realising outcomes of G’s type;

3. no available alternative action is a significantly better* means of realising outcome G;

4. the occurrence of outcome G is desirable;

5. there is no other outcome, G′, the occurrence of which would be at least comparably desirable and where (2) and (3) both hold of G′ and a;

Therefore:

6. G is a goal to which action a is directed.
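One way to make the inference pattern above concrete is to write it out as a small program. This is a minimal sketch under loudly stated assumptions, not Csibra and Gergely’s own formulation. The `Scene` structure, the numeric costs and desirability scores, the `margin` standing in for ‘significantly better’, and the tea-pouring numbers are all hypothetical. Premise 1 is simply assumed. ‘At least comparably desirable’ in premise 5 is read as ‘at least as desirable’.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Scene:
    """Hypothetical encoding of what an observer knows about one situation."""
    means: set[tuple[str, str]]          # (action, outcome): action type normally realises outcome type (premise 2)
    cost: dict[tuple[str, str], float]   # observer's cost estimate for realising an outcome by an action (premise 3)
    desirability: dict[str, float]       # hypothetical objective desirability of each outcome (premises 4 and 5)
    available: set[str]                  # actions available to the agent on this occasion
    margin: float = 0.0                  # how much cheaper an alternative must be to count as "significantly better"


def normally_means(scene: Scene, action: str, outcome: str) -> bool:
    # Premise 2: actions of this type are normally means of realising this outcome.
    return (action, outcome) in scene.means


def no_better_alternative(scene: Scene, action: str, outcome: str) -> bool:
    # Premise 3: no available alternative that is normally a means to the outcome
    # is cheaper than the observed action by more than the margin.
    own = scene.cost[(action, outcome)]
    return all(
        scene.cost[(alt, outcome)] >= own - scene.margin
        for alt in scene.available
        if alt != action and normally_means(scene, alt, outcome)
    )


def premises_2_and_3(scene: Scene, action: str, outcome: str) -> bool:
    # Both conditions that premise 5 also checks for rival outcomes.
    return normally_means(scene, action, outcome) and no_better_alternative(scene, action, outcome)


def ascribe_goals(scene: Scene, action: str) -> list[str]:
    """Return every outcome G for which premises 2 to 5 hold (premise 1 is assumed)."""
    goals = []
    for g, value in scene.desirability.items():
        if not premises_2_and_3(scene, action, g):
            continue
        if value <= 0:  # premise 4: the outcome must be desirable
            continue
        rival = any(  # premise 5: no other at-least-as-desirable outcome satisfies (2) and (3)
            other != g and other_value >= value and premises_2_and_3(scene, action, other)
            for other, other_value in scene.desirability.items()
        )
        if not rival:
            goals.append(g)
    return goals


# Toy version of the tea example: one pouring action realises every outcome.
outcomes = {"tea in mug": 1.0, "liquid poured": 0.2, "elbow moved": 0.0, "air moved": 0.0}
tea = Scene(
    means={("pour", g) for g in outcomes},
    cost={("pour", g): 1.0 for g in outcomes},
    desirability=outcomes,
    available={"pour"},
)
print(ascribe_goals(tea, "pour"))  # → ['tea in mug']
```

In this toy scene premise 4 rules out moving the elbow and moving air. Premise 5 rules out merely pouring liquid, because pouring tea into the mug is (by stipulation) more desirable and premises 2 and 3 hold of it too.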

We start with the assumption that we know the event is an action.

Why ‘normally’ in premise 2? Because of the ‘seen as’ in the quote.

Any objections?

I have an objection.

Consider a case in which I perform an action directed to the outcome of pouring some hot tea into a mug.

Could this pattern of inference imply that this outcome is the goal of my action?

Only if it also implies that moving my elbow is a goal of my action as well.

And pouring some liquid.

And moving air in a certain way.

And ...

How can we avoid this objection?

Doesn’t appealing to desirability in premises 4 and 5 conflict with the aim of explaining *pure* behaviour reading?

Not if ‘desirable’ is understood as something objective.

[explain]

Now we are almost done, I think.

OK, I think this is reasonably true to the quote.

So we’ve understood the claim.

But is it true?

How good is the agent at optimising the selection of means to her goals?

And how good is the observer at identifying the optimality of means in relation to outcomes?

\textbf{

For correct goal ascription, there must be a match between

(i) how well the agent can optimise her choice of means

and

(ii) how well the observer can detect such optimality.}

Failing such a match, the inference will not result in correct goal ascription.

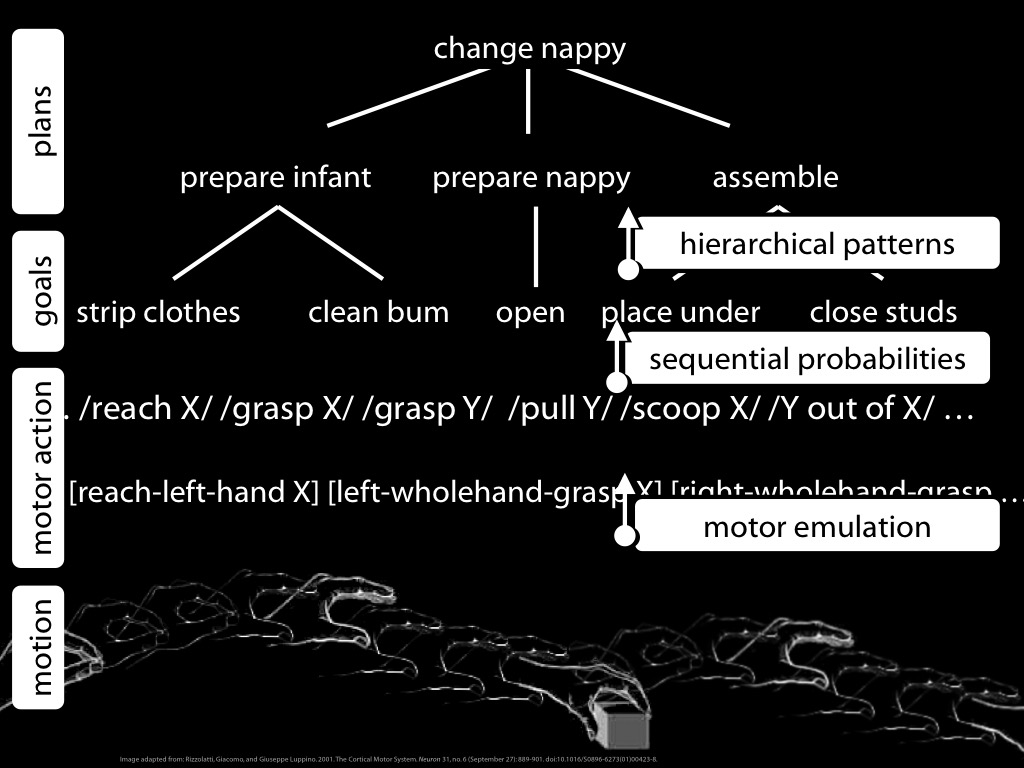
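The point about matching can be illustrated with a toy comparison. This sketch is only an illustration under hypothetical assumptions: the two routes, the `true_effort` and `distance` figures, and the functions `agent_choice` and `observer_sees_optimal` are invented for the example. The agent picks the route that is cheapest by a factor the observer cannot measure. An observer who can only compare distances then judges the chosen route inefficient, so premise 3 would fail and the goal would not be ascribed.

```python
# Hypothetical example: two routes to the same outcome. "true_effort" is what the
# agent's choice of means is sensitive to (say, wading through mud is tiring);
# "distance" is the only cost the observer can compute in this sketch.
routes = {
    "straight through mud": {"true_effort": 10.0, "distance": 4.0},
    "around the mud": {"true_effort": 6.0, "distance": 6.0},
}


def agent_choice(routes):
    # The agent optimises her choice of means by true effort.
    return min(routes, key=lambda name: routes[name]["true_effort"])


def observer_sees_optimal(routes, chosen, measure):
    # The observer checks whether the chosen route is optimal by the measure it can detect.
    return routes[chosen][measure] <= min(route[measure] for route in routes.values())


chosen = agent_choice(routes)
print(chosen)                                                # → around the mud
print(observer_sees_optimal(routes, chosen, "true_effort"))  # → True (match)
print(observer_sees_optimal(routes, chosen, "distance"))     # → False (mismatch)
```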

But I don’t think this is an objection to the Teleological Stance as a computational theory of pure goal ascription. It is rather a detail which concerns the next level, the level of representations and algorithms.

The computational theory imposes demands at the next level.

‘Such calculations require detailed knowledge of biomechanical factors that determine the motion capabilities and energy expenditure of agents. However, in the absence of such knowledge, one can appeal to heuristics that approximate the results of these calculations on the basis of knowledge in other domains that is certainly available to young infants. For example, the length of pathways can be assessed by geometrical calculations, taking also into account some physical factors (like the impenetrability of solid objects). Similarly, the fewer steps an action sequence takes, the less effort it might require, and so infants’ numerical competence can also contribute to efficiency evaluation.’ \citep{csibra:2013_teleological}
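The heuristics mentioned in the quote (path length assessed geometrically, with solid objects treated as impenetrable, and fewer steps taken as less effort) can be illustrated with a toy grid world. This is a minimal sketch, assuming a hypothetical grid in which `#` cells are impenetrable obstacles and effort is approximated by the number of grid steps. The functions `shortest_path_length` and `looks_efficient` and the `tolerance` parameter are illustrative names, not taken from Csibra and Gergely.

```python
from collections import deque


def shortest_path_length(grid, start, goal):
    """Breadth-first search over 4-connected grid cells.

    '#' cells are treated as impenetrable solid objects. Returns the number of
    steps on the shortest path, or None if the goal cannot be reached.
    """
    rows, cols = len(grid), len(grid[0])
    frontier = deque([(start, 0)])
    seen = {start}
    while frontier:
        (r, c), steps = frontier.popleft()
        if (r, c) == goal:
            return steps
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nr, nc = r + dr, c + dc
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] != "#" and (nr, nc) not in seen:
                seen.add((nr, nc))
                frontier.append(((nr, nc), steps + 1))
    return None


def looks_efficient(observed_steps, grid, start, goal, tolerance=0):
    """Heuristic efficiency check: the observed path counts as efficient if it is
    no more than `tolerance` steps longer than the shortest available path."""
    best = shortest_path_length(grid, start, goal)
    return best is not None and observed_steps <= best + tolerance


with_barrier = [
    ".....",
    "..#..",
    ".....",
]
without_barrier = [
    ".....",
    ".....",
    ".....",
]
start, goal = (1, 0), (1, 4)
detour = 6  # the agent goes up and over the middle cell instead of straight across

print(shortest_path_length(with_barrier, start, goal))        # → 6
print(shortest_path_length(without_barrier, start, goal))     # → 4
print(looks_efficient(detour, with_barrier, start, goal))     # → True
print(looks_efficient(detour, without_barrier, start, goal))  # → False
```

With the barrier present, the detour matches the shortest available path. With the barrier removed, the same detour is longer than necessary, so the heuristic no longer counts it as efficient.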

So this is the Teleological Stance, a computational description of goal ascription.

Although this is rarely noted, I think the Teleological Stance takes us beyond Dennett’s intentional stance because it allows us to ascribe different goals to different people on the basis of what they do.

You reach for the red box, so your goal is to retrieve the food.

I reach for the blue box, so my goal is to retrieve the poison.

But there is a problem for the Teleological Stance ...